AI Search Visibility Benchmarks 2026: Metrics + Targets

- AI search visibility benchmarks define how AI systems represent your brand. They are target ranges and trends that measure where, how, and whether your brand appears in AI-generated answers, not rankings or traffic.

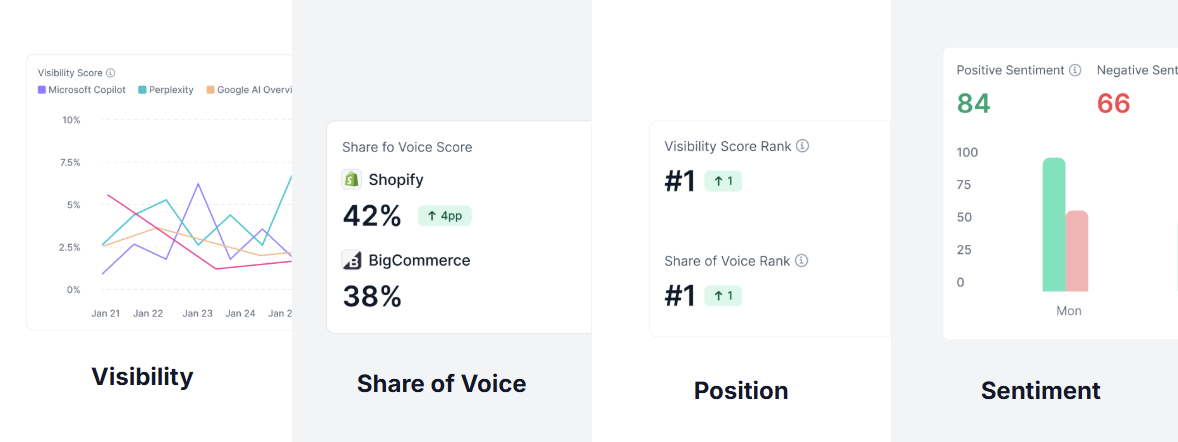

- Benchmarking AI visibility relies on four core metrics. Visibility score, Share of Voice, position, and sentiment show presence, competitiveness, prominence, and framing in AI search results.

- Benchmarks are only meaningful when measured consistently. Using a fixed set of prompts and platforms, plus a baseline snapshot, makes AI visibility comparable over time and easier to act on.

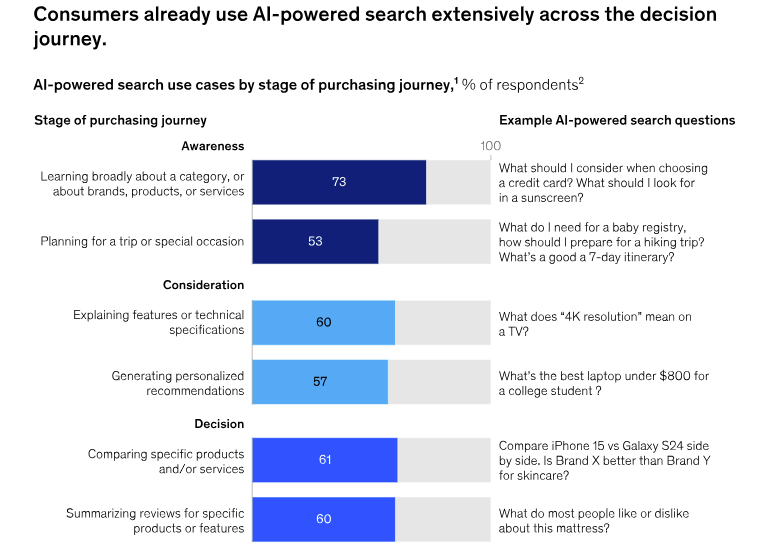

When an AI-generated summary appears, users are significantly less likely to click through to other websites, according to Pew Research.

That shift means visibility inside AI answers matters as much as traditional search performance, if not more.

AI search visibility benchmarks measure how often, where, and how AI systems represent brands and agencies.

In this guide, we define AI search visibility benchmarks, the metrics to track, and the benchmark ranges to aim for. You'll also learn how tools like WorkDuo can help you build search visibility benchmarks in 2026.

What Are AI Search Visibility Benchmarks in 2026?

In 2026, AI search visibility benchmarks are target ranges and trend goals that show how often and how consistently AI systems represent your brand, products, or services.

AI answers tend to build brand recall first, while conversions often occur later through follow-up searches or direct visits.

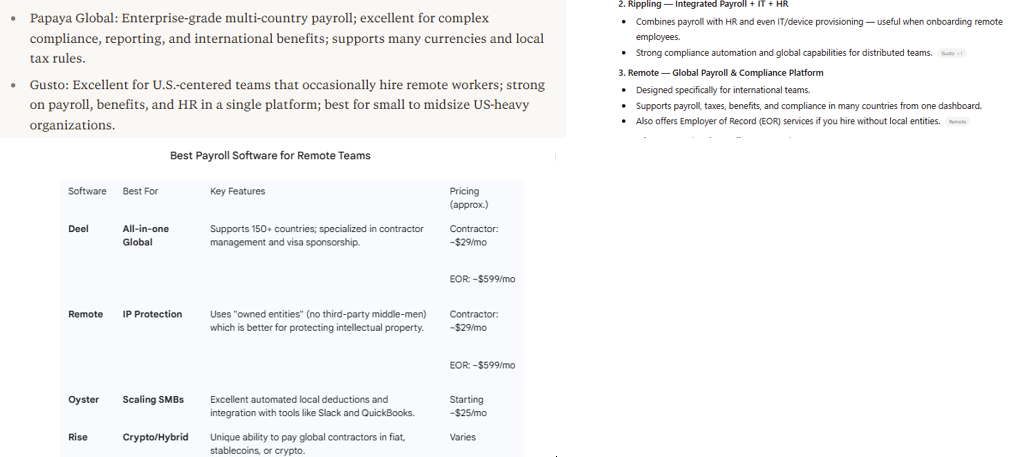

For example, in queries like “Best payroll software for remote teams,” AI responses often surface the same two or three brands repeatedly, shaping preferences before any clicks occur.

Traditional SEO Metrics vs AI Visibility Metrics Compared

Traditional SEO metrics still matter, but they only describe performance inside search engines.

AI visibility metrics answer a different question: whether AI systems include your brand at all when buyers ask for recommendations or explanations.

Here’s how traditional and AI visibility metrics compare at a glance:

Why AI Search Visibility Metrics Matter Now

AI is no longer a fringe discovery tool.

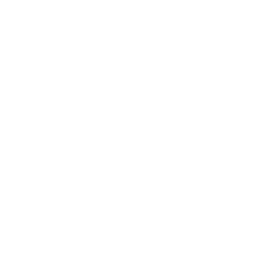

According to McKinsey, 44% of users say AI-powered search is their main and preferred source of insight.

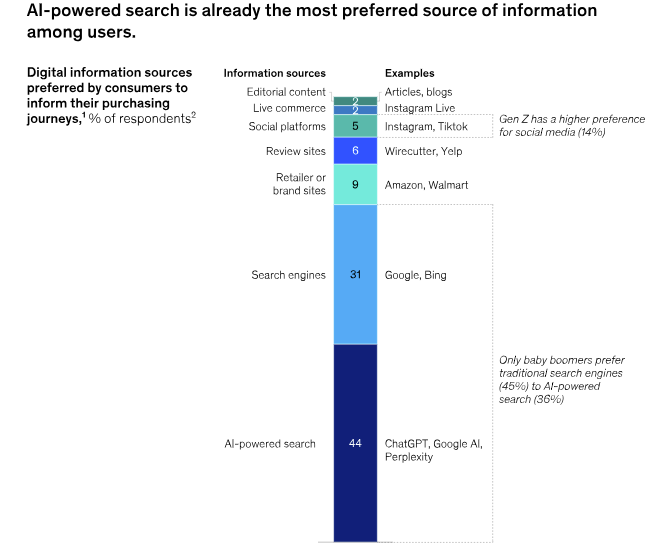

Additionally, consumers now use AI-powered search across their entire decision journey.

As a result, AI summaries increasingly replace traditional search results, making it vital to understand how to rank in AI Overviews.

Here are the core reasons AI search visibility metrics have become crucial for modern marketing and growth teams.

1. Mentions Matter More Than Rankings

Pew Research shows that users are less likely to click on links when an AI summary appears. This means many searches now end without a website visit.

Gartner also predicts that traditional search volume could decline by up to 25% by 2026 as AI answers intercept more queries.

On average, only 12% of AI citations rank in Google's top 10 for the original query, highlighting the growing importance of Generative Engine Optimization.

In this environment, visibility is no longer about position. It is about whether your brand is included in the answer users actually read.

2. AI Search Is Fragmented Across Platforms

AI systems do not consistently produce the same answers to the same questions. The same query can produce different brands, sources, and recommendations depending on the AI interface being used.

For example, a simple search for “Best payroll software for remote teams” yields varied results across ChatGPT, Perplexity, and Gemini.

That means visibility in one system does not translate to visibility across all buyer search channels.

Without tracking presence across platforms, you risk assuming coverage where real gaps still exist.
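One way to make these gaps visible is to compute presence separately for each platform. The sketch below assumes you have already recorded, for each platform and prompt, whether your brand was mentioned. The `results` records and platform keys are hypothetical illustration values, not real measurements.

```python
from collections import defaultdict

# Hypothetical records: (platform, prompt, brand_was_mentioned)
results = [
    ("chatgpt", "best payroll software for remote teams", True),
    ("chatgpt", "payroll tools for distributed startups", True),
    ("perplexity", "best payroll software for remote teams", False),
    ("perplexity", "payroll tools for distributed startups", True),
    ("gemini", "best payroll software for remote teams", False),
    ("gemini", "payroll tools for distributed startups", False),
]


def visibility_by_platform(records):
    """Return the percentage of prompts that mention the brand, per platform."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, _prompt, mentioned in records:
        totals[platform] += 1
        if mentioned:
            hits[platform] += 1
    return {platform: 100 * hits[platform] / totals[platform] for platform in totals}


per_platform = visibility_by_platform(results)
for platform, pct in per_platform.items():
    print(f"{platform}: {pct:.0f}%")
```

In this made-up data the brand looks fully covered on one platform and absent on another, which is the kind of gap a single blended number would hide.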

You can use an AI mode SEO tracking tool like WorkDuo to track your brand across ChatGPT, Perplexity, Gemini, and beyond. The platform uses real-time tracking to provide the visibility you need to protect your reputation and grow your presence.

Sign up today and see how this works in practice.

3. Citations Are the Most Practical Lever You Have

AI systems build responses by pulling from sources they already trust.

While you cannot control models or prompts, you can influence where and how your information appears across the source ecosystem.

Tracking citations shows which sources AI systems rely on and where gaps exist.

Improving coverage and clarity across those sources increases the likelihood of inclusion in AI-generated answers.

4. Attribution Breaks Before Conversion Happens

AI influence rarely shows up as a clear referral.

Your brand may be mentioned in an AI answer, build familiarity, and only see the impact later through branded search, direct visits, or offline decisions.

Traditional analytics often miss this early influence.

AI visibility metrics help you determine whether your brand was present upstream, even when conversions occur elsewhere.

What AI Search Metrics Should You Use for Benchmarking?

AI search visibility benchmarks focus on how often, where, and how consistently AI systems surface your brand when users ask questions that influence decisions.

Here are the key metrics you should use for benchmarking:

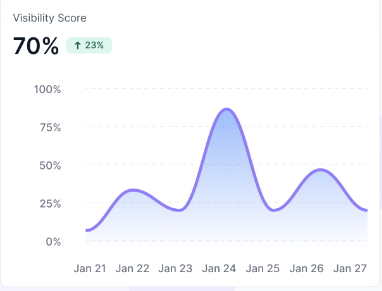

1. Visibility Score

The visibility score measures how often your brand appears in AI answers across a defined set of prompts. It shows whether you are present where AI-driven discovery actually happens.

Why it matters:

- Establishes baseline AI visibility

- Reveals gaps where competitors appear and you do not

- Makes progress measurable over time

Example:

Say you track 100 prompts like “best payroll software for remote teams,” and your brand appears in 42 of the AI answers. Your visibility score is 42 ÷ 100 = 42%.
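The same arithmetic can be written as a small helper. This is a minimal sketch of the calculation described here, not any tool's actual scoring method; the function name `visibility_score` is an assumption made for illustration.

```python
def visibility_score(appearances: int, total_prompts: int) -> float:
    """Percentage of tracked prompts whose AI answer mentions the brand."""
    if total_prompts <= 0:
        raise ValueError("total_prompts must be positive")
    return 100 * appearances / total_prompts


# Figures from the example: 42 appearances across 100 prompts.
score = visibility_score(appearances=42, total_prompts=100)
print(f"Visibility score: {score:.0f}%")  # Visibility score: 42%
```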

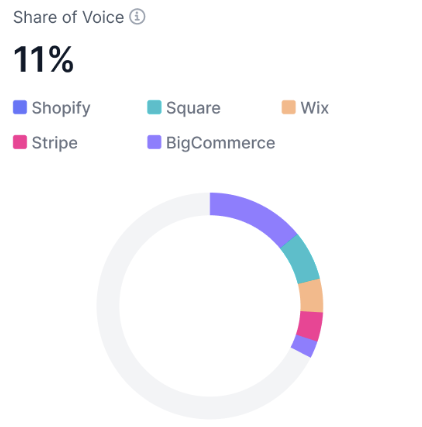

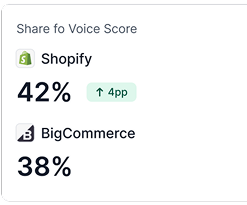

2. Share of Voice (SOV)

Share of Voice measures how often your brand appears in AI answers relative to competitors.

While visibility shows if you are present, SOV shows whether you are winning attention.

It is calculated as:

SOV (%) = (Your brand mentions ÷ Total brand mentions across all tracked brands) × 100

Why it matters:

- Puts your visibility in a competitive context

- Shows who AI systems favor for a topic

- Helps prioritize gaps against specific competitors

Example:

Say across 100 “best payroll software for remote teams” prompts, Brand A appears in 42 answers, Brand B in 28, and your brand in 30. That is 100 brand mentions in total, so your AI SOV is 30 ÷ (42 + 28 + 30) = 30%.
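Here is the SOV formula as a short sketch, using the mention counts from the example. The function name `share_of_voice` and the dictionary layout are assumptions made for illustration. Note that the denominator is the total number of brand mentions across tracked brands, not the number of prompts.

```python
def share_of_voice(mentions: dict[str, int], brand: str) -> float:
    """SOV (%) = brand mentions / total mentions across all tracked brands * 100."""
    total = sum(mentions.values())
    if total == 0:
        raise ValueError("no brand mentions recorded")
    return 100 * mentions[brand] / total


# Mention counts from the example above.
mentions = {"Brand A": 42, "Brand B": 28, "Your brand": 30}
sov = share_of_voice(mentions, "Your brand")
print(f"Share of Voice: {sov:.0f}%")  # Share of Voice: 30%
```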

3. Position or Placement

Position or placement tracks where your brand appears within an AI answer, not just whether it appears at all.

Being mentioned earlier generally signals higher relevance.

Why it matters:

- Earlier placement increases recall and perceived trust

- Helps distinguish strong visibility from weak inclusion

- Adds depth beyond simple mention counts
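One possible way to quantify placement is to record the order in which brands are named and then summarize it. The sketch below assumes a simple convention (1 means your brand was the first one named, `None` means it was not mentioned). The convention and the sample values are hypothetical, not a standard.

```python
from statistics import mean

# Hypothetical placements for eight answers:
# 1 = first brand named in the answer, None = brand not mentioned at all.
placements = [1, 3, None, 2, 1, None, 4, 1]

# Only answers that mention the brand count toward placement.
mentioned = [p for p in placements if p is not None]

average_position = mean(mentioned)
first_mention_rate = 100 * sum(p == 1 for p in mentioned) / len(mentioned)

print(f"Average position: {average_position:.1f}")      # Average position: 2.0
print(f"Named first in: {first_mention_rate:.0f}% of mentions")  # Named first in: 50% of mentions
```

Keeping absent answers out of the average avoids mixing placement with visibility, which is already tracked by the visibility score.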

4. Sentiment

Sentiment measures how your brand is described in AI responses.

Being included negatively or with caveats is very different from being recommended confidently.

Why it matters:

- AI language shapes perception, not just awareness

- Neutral or negative framing can suppress intent

- Positive sentiment reinforces trust and preference

In benchmarking, sentiment helps distinguish helpful visibility from exposure that may actually hurt consideration.
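A simple way to benchmark sentiment is to label each answer that mentions your brand and track the share of each label. The sketch below assumes the labels already exist (assigned by a reviewer or a classifier of your choice). The label names and sample data are hypothetical.

```python
from collections import Counter

# Hypothetical labels, one per AI answer that mentions the brand.
labels = [
    "positive", "neutral", "positive", "negative", "positive",
    "neutral", "positive", "neutral", "positive", "neutral",
]

counts = Counter(labels)
total = len(labels)

# Share of each sentiment label as a percentage of all labeled answers.
distribution = {
    label: 100 * counts[label] / total
    for label in ("positive", "neutral", "negative")
}

for label, pct in distribution.items():
    print(f"{label}: {pct:.0f}%")
```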

AI Search Visibility Metrics Summarized

The table below summarizes the core AI search visibility metrics you can use to benchmark how AI systems surface, prioritize, and describe your brand.

Understanding these metrics is the first step. Consistently tracking them is what drives action.

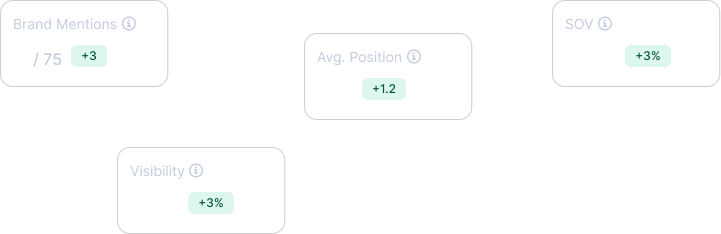

WorkDuo brings visibility, share of voice, position, and sentiment into one view. This shows:

- How often AI systems surface your brand

- How you compare to competitors

- Where you appear in answers, and how you are described

Sign up today to see your brand’s AI search visibility in real time.

How to Build Your AI Search Visibility Benchmarks

Building AI search visibility benchmarks is about creating a repeatable way to measure how AI systems represent your brand over time.

Here's how to do that step by step.

Step 1: Define the Prompts You Want to Benchmark

Start by deciding which AI questions matter. These prompts define what visibility means for your brand or client.

With a tool like WorkDuo, you create a fixed prompt list based on real buyer intent.

- Use questions buyers would actually ask AI systems

- Prioritize decision and comparison queries

- Keep wording consistent so results are comparable over time

Step 2: Organize Prompts by Intent

Next, structure prompts so insights are easy to read and act on.

A tool like WorkDuo lets you group prompts by intent across the buyer journey.

- Separate BOFU, competitor, pain point, and brand prompts

- Keep intent categories clean and distinct

- Reuse the same structure every reporting cycle
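If you keep the prompt list in a file or script, the grouping from Steps 1 and 2 might look like the sketch below. The intent keys follow the categories named above. The prompts themselves and the `validate_prompt_set` helper are hypothetical examples, not part of any tool.

```python
# Hypothetical fixed prompt set, grouped by intent category.
PROMPT_SET = {
    "bofu": [
        "best payroll software for remote teams",
        "which payroll tool should a 50-person remote company buy",
    ],
    "competitor": ["Brand A vs Brand B for payroll"],
    "pain_point": ["how to pay contractors in multiple countries"],
    "brand": ["is Your Brand payroll reliable"],
}


def validate_prompt_set(prompt_set):
    """Raise if any prompt appears twice; return the number of unique prompts."""
    seen = {}
    for intent, prompts in prompt_set.items():
        for prompt in prompts:
            key = prompt.strip().lower()
            if key in seen:
                raise ValueError(
                    f"{prompt!r} is listed under {seen[key]!r} and {intent!r}"
                )
            seen[key] = intent
    return len(seen)


total_prompts = validate_prompt_set(PROMPT_SET)
print(f"{total_prompts} prompts across {len(PROMPT_SET)} intent categories")
```

Checking for duplicates before each reporting cycle is one way to keep categories distinct and the wording stable.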

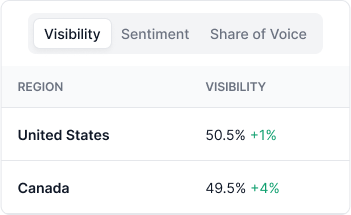

Step 3: Select AI Platforms and Regions

AI answers differ by platform and location. Benchmarks only work when tracking conditions are clearly defined.

With a tool like WorkDuo, you lock in where visibility is measured.

- Select the AI systems that matter to your audience

- Choose regions if results vary by market

- Keep platform and region settings fixed

Step 4: Capture Your Baseline Snapshot

Once everything is set, take your first snapshot. This becomes your reference point.

A tool like WorkDuo records how AI systems currently represent your brand.

- Capture visibility score, share of voice, position, and sentiment

- Treat this as a baseline, not a judgment

- Use the same setup for every future check
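The fixed setup from Step 3 and the baseline from Step 4 can be stored together so that later checks are compared like for like. The sketch below is one possible record layout. All class names, field names, the date, and the metric values are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)  # frozen: the setup cannot be changed after creation
class TrackingSetup:
    platforms: tuple[str, ...]
    regions: tuple[str, ...]
    prompt_count: int


@dataclass(frozen=True)
class Snapshot:
    taken_on: str
    setup: TrackingSetup
    visibility_pct: float
    share_of_voice_pct: float
    average_position: float
    positive_sentiment_pct: float


# Hypothetical setup and baseline values.
setup = TrackingSetup(
    platforms=("chatgpt", "perplexity", "gemini"),
    regions=("US",),
    prompt_count=100,
)
baseline = Snapshot(
    taken_on=date(2026, 1, 5).isoformat(),
    setup=setup,
    visibility_pct=42.0,
    share_of_voice_pct=30.0,
    average_position=2.0,
    positive_sentiment_pct=50.0,
)

# Serialize the baseline so future checks can be compared against it.
baseline_json = json.dumps(asdict(baseline), indent=2)
print(baseline_json)
```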

Step 5: Set Your Benchmark Ranges and Review Cadence

Decide what “good” looks like before trying to improve anything.

- Set target ranges for visibility, share of voice, placement, and sentiment

- Agree on a consistent review cadence (weekly or monthly)

- Keep benchmarks directional, not absolute

This avoids reacting to short-term swings and keeps focus on trends.
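Target ranges can be written down explicitly and checked at each review. In the sketch below, the ranges are placeholders chosen only to show the mechanics and are not recommended benchmarks. The metric names are assumptions as well.

```python
# Hypothetical target ranges as (low, high); placeholder numbers only.
TARGETS = {
    "visibility_pct": (40.0, 60.0),
    "share_of_voice_pct": (25.0, 40.0),
    "average_position": (1.0, 3.0),  # lower is better for position
    "positive_sentiment_pct": (60.0, 100.0),
}


def check_against_targets(metrics, targets):
    """Label each metric as 'below', 'within', or 'above' its target range."""
    status = {}
    for name, (low, high) in targets.items():
        value = metrics[name]
        if value < low:
            status[name] = "below"
        elif value > high:
            status[name] = "above"
        else:
            status[name] = "within"
    return status


# Hypothetical current readings.
current = {
    "visibility_pct": 42.0,
    "share_of_voice_pct": 30.0,
    "average_position": 2.0,
    "positive_sentiment_pct": 50.0,
}
status = check_against_targets(current, TARGETS)
for name, label in status.items():
    print(f"{name}: {label}")
```

Reporting a range status rather than a pass or fail on a single number fits the idea of keeping benchmarks directional.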

Step 6: Diagnose Why AI Represents You the Way It Does

Use your benchmark data to understand why AI systems surface your brand as they do.

- Compare prompts where you appear vs where you do not

- Look for patterns in competitors that are consistently mentioned

- Pay attention to framing and sentiment, not just inclusion

This step turns metrics into insight.
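The first two comparisons in this step can be sketched in a few lines: list the prompts where your brand is missing, then count which competitors show up in those gaps. The `answers` data and the `diagnose_gaps` helper are hypothetical.

```python
from collections import Counter

# Hypothetical results: prompt -> brands named in the AI answer.
answers = {
    "best payroll software for remote teams": ["Brand A", "Your brand", "Brand B"],
    "payroll tools for distributed startups": ["Brand A", "Brand B"],
    "how to pay contractors in multiple countries": ["Brand A"],
    "cheapest payroll software for small teams": ["Brand B", "Your brand"],
}


def diagnose_gaps(answers, brand):
    """Return prompts missing the brand and how often rivals appear in them."""
    missing = [prompt for prompt, brands in answers.items() if brand not in brands]
    rivals = Counter(b for prompt in missing for b in answers[prompt])
    return missing, rivals


missing_prompts, rivals_in_gaps = diagnose_gaps(answers, "Your brand")
print("Prompts without your brand:", missing_prompts)
print("Competitors in those answers:", rivals_in_gaps.most_common())
```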

Step 7: Use Insights to Guide Action

Only after diagnosis should you decide what to change. You can choose to:

- Adjust content, data structure, or coverage based on gaps

- Align teams around specific visibility goals

- Re-check benchmarks using the same setup to measure impact

This closes the loop between measurement and action.
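Measuring impact then comes down to comparing a re-check against the baseline, metric by metric. The sketch below assumes both snapshots were taken with the same prompts, platforms, and regions. The values and the `metric_deltas` helper are hypothetical.

```python
# Hypothetical baseline and re-check readings from the same tracking setup.
baseline = {"visibility_pct": 42.0, "share_of_voice_pct": 30.0}
recheck = {"visibility_pct": 47.0, "share_of_voice_pct": 29.0}


def metric_deltas(before, after):
    """Return the change in each metric; both snapshots must track the same metrics."""
    if before.keys() != after.keys():
        raise ValueError("snapshots must track the same metrics")
    return {name: round(after[name] - before[name], 2) for name in before}


deltas = metric_deltas(baseline, recheck)
for name, change in deltas.items():
    print(f"{name}: {change:+.1f} points")
```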

Make Your AI Search Visibility Benchmarks Measurable With WorkDuo

If you can’t measure how AI systems talk about your brand, you can’t improve it.

AI search visibility requires clear benchmarks, consistent tracking, and a way to see how mentions, placement, and sentiment change over time.

WorkDuo provides brands and agencies with these AI search visibility benchmarks.

You can reliably define success and monitor AI search visibility across platforms, whether you are managing your own brand or your clients’.

Get started with WorkDuo to track your AI search visibility today.
