AI Search Volume: How Demand Works in AI Search (2026 Guide)

- AI search volume is the estimated frequency with which topics/questions are entered into AI tools like ChatGPT, Perplexity, and Google AI surfaces, typically modeled as intent clusters rather than exact keywords.

- Traditional search volume breaks in AI search because there’s no single repeatable query; prompts are longer and vary by context, constraints, and preferences.

- Outcomes differ because AI can answer without clicks and pulls from many sources, so success is measured by mentions, citations, and recommendations, not rankings.

- Analyze it by tracking a fixed intent set over time and measuring presence, position, share of voice, citations, sentiment, framing, and competitor swaps, then prioritizing fixes.

AI search volume sounds simple, but it does not behave like classic keyword volume. One intent can turn into a stream of prompts, rewrites, and follow-ups inside a single conversation. The old model of “one keyword, one volume” no longer holds.

This article breaks down what AI search volume means, why traditional search volume breaks, and how AI search metrics differ from classic SEO numbers.

We will look at prompt volume vs. search volume, why prompt volume is tough to pin down, how one intent can become many prompts, how to analyze AI search data, how teams should think about demand in AI search, and how to track what really matters with a high-quality AI search tool.

What Is AI Search Volume?

AI search volume refers to the estimated number of times people enter specific queries, questions, or topics into AI-powered tools and search interfaces such as ChatGPT, Perplexity, and Google’s AI Mode or AI Overviews over a set period.

Unlike traditional search volume, which tracks how often people type a short keyword into Google, AI queries are typically longer, more conversational, and driven by intent. This shift is at the heart of the SEO vs GEO (generative engine optimization) debate, where the goal moves from ranking for a keyword to being the cited answer for an intent.

AI search demand exists, but it can behave differently from keyword-based search because these interactions do not always create the same click patterns or clear, visible rankings.

Why Traditional Search Volume Breaks in AI Search

Here’s why the usual keyword-based search volume doesn’t translate cleanly to AI-driven search experiences.

1. No Single “Query” To Count

Traditional search volume counts exact keyword matches. AI search volume is typically modeled around intent clusters, where different prompts targeting the same outcome are grouped together.

For example, someone who wants resume help might ask:

- “How do I write a resume for my first job?”

- “Best resume format for fresh grads.”

Although the wording differs, the intent is the same, so both prompts roll up into a single cluster, such as “entry-level resume with no experience.”
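As a rough illustration of that roll-up, the sketch below counts the same observations two ways: by exact wording and by intent cluster. The observation list and its hand-assigned cluster labels are hypothetical; real tools would need some method for assigning prompts to clusters, which is not covered here.

```python
from collections import Counter

# Hypothetical observations: (prompt text, hand-assigned intent cluster).
observed = [
    ("How do I write a resume for my first job?", "entry-level resume with no experience"),
    ("Best resume format for fresh grads.", "entry-level resume with no experience"),
    ("How do I write a resume for my first job?", "entry-level resume with no experience"),
]

# Keyword-style counting: every distinct wording gets its own number.
exact_counts = Counter(prompt for prompt, _ in observed)

# Intent-style counting: different wordings roll up into one cluster.
cluster_counts = Counter(cluster for _, cluster in observed)

print(exact_counts.most_common())
print(cluster_counts.most_common())
```

Counting by exact wording splits the demand across two entries, while counting by cluster keeps it together under one intent.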

2. Prompts Are Longer, Messier, And More Variable

In classic SEO, people often search using short phrases. In AI-led search, prompts are more conversational and packed with context (role, location, constraints, preferences), with queries averaging 23 words.

That creates countless unique prompts for the same need, so the volume of a single keyword becomes less meaningful.

3. The Model Can Answer Without Clicking

Traditional search volume roughly correlates with click potential. In AI experiences (ChatGPT, Perplexity, Google’s AI surfaces), the answer can be delivered inside the interface. Demand still exists, but it may not translate into the same number of visits or rankings because the “win” is being included in the answer itself.

4. Retrieval Is Blended Across Many Sources

AI engines draw on many kinds of sources, not just written pages and backlinks. In a typical flow, they:

- Reformulate the prompt to interpret intent

- Retrieve from multiple web sources (not one index)

- Summarize and rewrite into a single response

That means “search activity” isn’t always hitting an index you can measure like Google’s.

5. Discovery Happens Through Recommendations, Not Rankings

Keyword volume assumes you’re trying to rank a page for a term. In AI search, you’re trying to be:

- Mentioned

- Cited

- Recommended

6. Legacy Volume Tools Break In AI Search

Legacy SEO platforms like Ahrefs and Semrush were built to estimate Google keyword demand, not AI prompt demand. Even for traditional search, their organic traffic estimates can be 48-62% off versus Google Search Console, so “volume” is already noisy before you apply it to AI search.

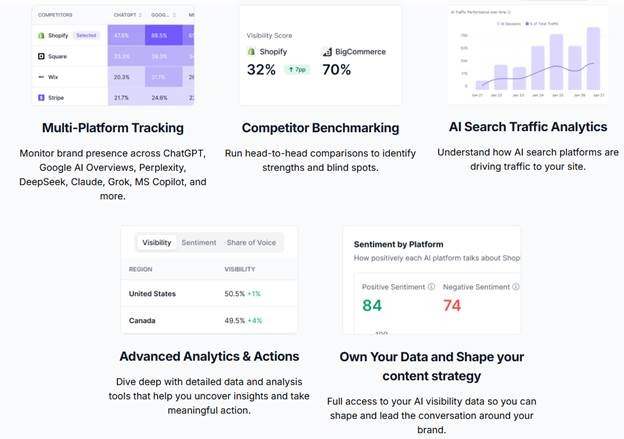

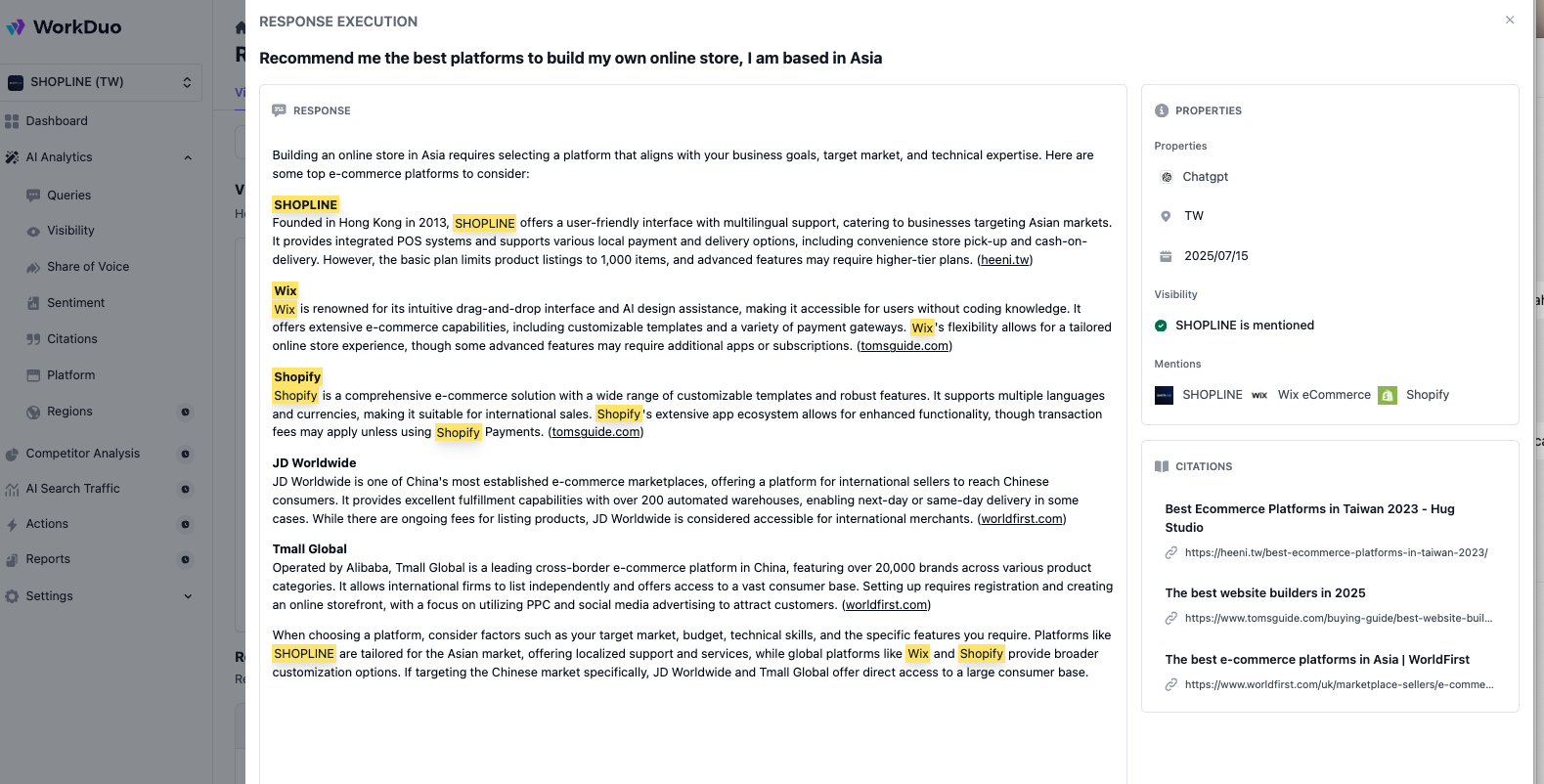

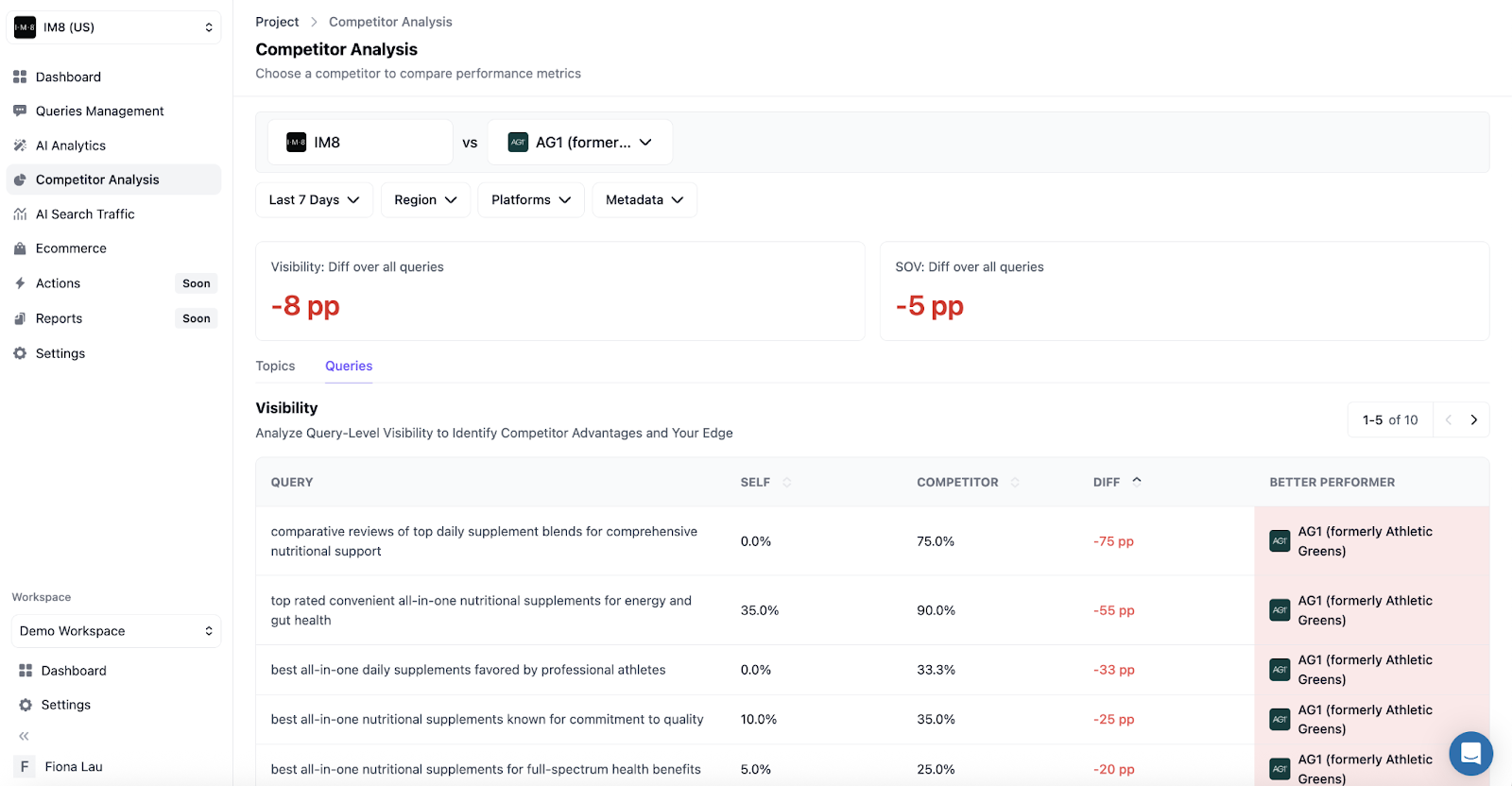

To measure AI visibility more accurately, AI-native platforms (like WorkDuo) track signals such as:

- Multi-platform tracking

- AI presence and position

- Sentiment themes

- Competitor benchmarking

- Share of voice

How Does AI Search Volume Differ From Traditional Search Metrics

Let’s break down how AI search volume differs from traditional search volume in practice.

Prompt Volume vs Search Volume

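To set the baseline, let’s separate the two: traditional search volume counts how often an exact keyword is entered into a search engine, while prompt volume estimates how often an intent is expressed across many differently worded prompts in AI tools.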

Why Prompt Volume Is Difficult to “Measure”

Prompt volume is hard to “measure” because AI search is not organized around repeatable keywords. It is structurally open-ended, context-heavy, and often multi-turn, so the same intent can generate an endless number of prompt variations.

- Infinite phrasing: Small wording changes create new prompts, even when the intent is identical.

- Context packed into the query: People add goals, constraints, and preferences, which multiply variations fast.

- Personalization: Outputs and follow-up questions vary by user, history, and the conversation path.

- Multi-turn behavior: Demand is often spread across a sequence of prompts rather than a single query.

How One Intent Becomes Hundreds of Prompts

One intent can turn into hundreds of prompts because different people ask with different contexts, constraints, and preferences, even when the goal is the same.

Example of Intent: Find the best AI tool to summarize and analyze customer support tickets.

Persona

- “I am a support lead. Which AI tool can summarize weekly ticket themes for my team?”

- “I am a CX manager. What AI tool helps turn ticket data into monthly insights for leadership?”

Constraints

- “Recommend a tool under $200 a month that can summarize tickets.”

- “We cannot use tools that store customer data. What are privacy-safe options?”

Context

- “We handle tickets from email, WhatsApp, and live chat. Which tool can unify and summarize all channels?”

- “We use Zendesk. What AI tools plug into Zendesk to analyze tickets and reduce repeat issues?”

Preferences

- “I want dashboards and trend charts, not just summaries. What should I use?”

- “I prefer a lightweight tool that outputs a simple weekly report in Google Docs.”

Tone and style

- “Give me a quick shortlist and your top pick, no long explanations.”

- “Explain like I am new to analytics and show me how to start.”

Combining these variables creates hundreds of unique permutations.
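The arithmetic behind that claim can be sketched in a few lines. The dictionary below uses two shortened example values per dimension, loosely based on the prompts above; the labels are illustrative, not real prompt data.

```python
from itertools import product

# Two illustrative values per dimension, mirroring the categories above.
dimensions = {
    "persona": ["support lead", "CX manager"],
    "constraint": ["under $200 a month", "no stored customer data"],
    "context": ["email, WhatsApp, and live chat", "Zendesk"],
    "preference": ["dashboards and trend charts", "simple weekly report"],
    "tone": ["quick shortlist", "beginner-friendly explanation"],
}

# Every combination of one value per dimension is a distinct prompt variant.
variants = list(product(*dimensions.values()))
print(len(variants))  # 2 ** 5 = 32

# With three values per dimension instead of two, the count is already 243.
print(3 ** len(dimensions))
```

Even before counting wording changes, a third option in each dimension pushes a single intent past two hundred distinct prompts.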

How to Analyze and Interpret AI Search Data

Use AI search signals to spot real demand, visibility gaps, and recommendation opportunities.

1) Start With an Intent Set (Not Keywords)

Begin with the tasks people use AI search for. This becomes your baseline.

- Pick 5-10 intents tied to outcomes (leads, trials, sales, shortlisting).

- For each intent, write 5-15 prompt variants that reflect real constraints (budget, region, compliance, integrations).

Common clusters:

- Best X for Y (best CRM for mid-market SaaS)

- Compare A vs B (HubSpot vs Salesforce for small teams)

- How do I choose (how to pick a PM tool for marketing)

- Pricing/alternatives (Notion pricing, Notion alternatives for enterprise)

2) Keep Runs Comparable

For every prompt run, log the engine or model, location, language, and date and time so you can compare like-for-like over time.

Small differences in setup can change the answer, the sources cited, and which brands appear.

Without consistent conditions, it becomes harder to interpret whether visibility changed because of your work or because the test setup changed.
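One way to keep runs comparable is to log each run as a small record and check that the setup matches before comparing answers. This is a minimal sketch: the `PromptRun` class, its field names, and the `engine-a` label are assumptions for illustration, not a standard schema or a real product name.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptRun:
    """One logged prompt run (hypothetical field names)."""
    prompt: str
    engine: str      # engine or model name
    location: str
    language: str
    run_at: datetime
    answer: str

    def setup(self):
        # The conditions that must match for a like-for-like comparison.
        return (self.prompt, self.engine, self.location, self.language)


def comparable(a: PromptRun, b: PromptRun) -> bool:
    """True when two runs differ only in time and answer."""
    return a.setup() == b.setup()


week_1 = PromptRun("best CRM for mid-market SaaS", "engine-a", "UK", "en",
                   datetime(2026, 1, 5, tzinfo=timezone.utc), "...")
week_2 = PromptRun("best CRM for mid-market SaaS", "engine-a", "UK", "en",
                   datetime(2026, 1, 12, tzinfo=timezone.utc), "...")
other_region = PromptRun("best CRM for mid-market SaaS", "engine-a", "US", "en",
                         datetime(2026, 1, 12, tzinfo=timezone.utc), "...")

print(comparable(week_1, week_2))        # True: same setup, one week apart
print(comparable(week_1, other_region))  # False: location changed
```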

3) Measure AI Visibility the Way AI Works (Not “Rank”)

Traditional “rank” doesn’t translate cleanly. In AI answers, the real question is: Do you appear, how are you framed, and are you selected?

Track signals like:

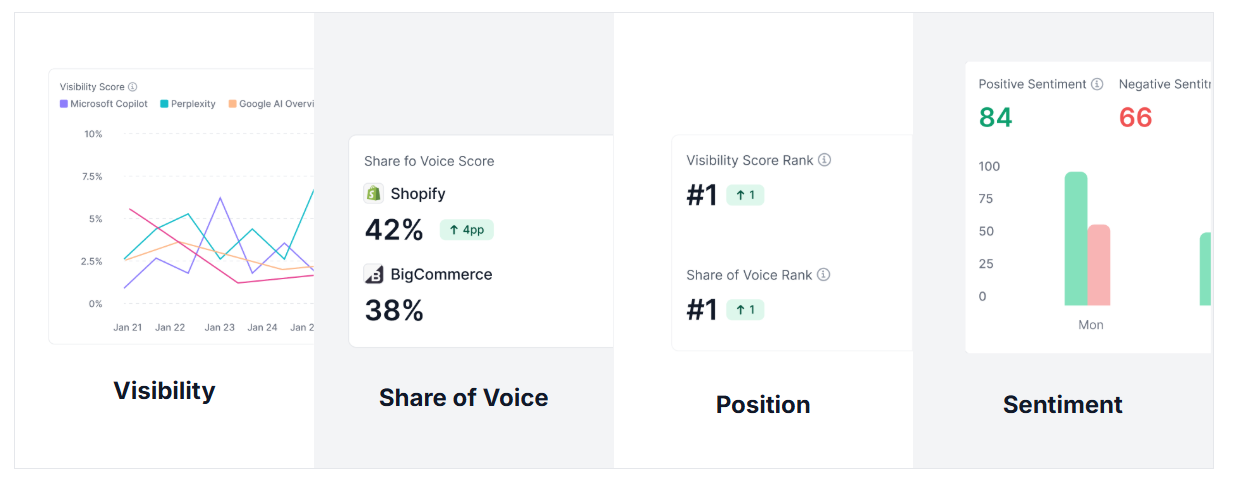

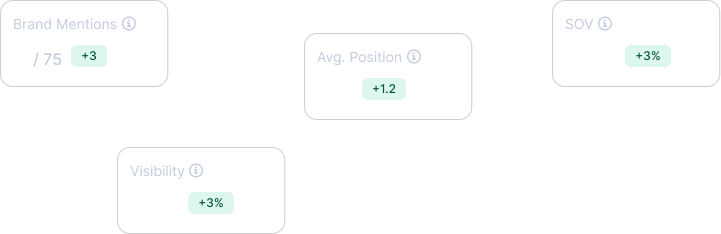

- Mentions: How many times are you mentioned across responses?

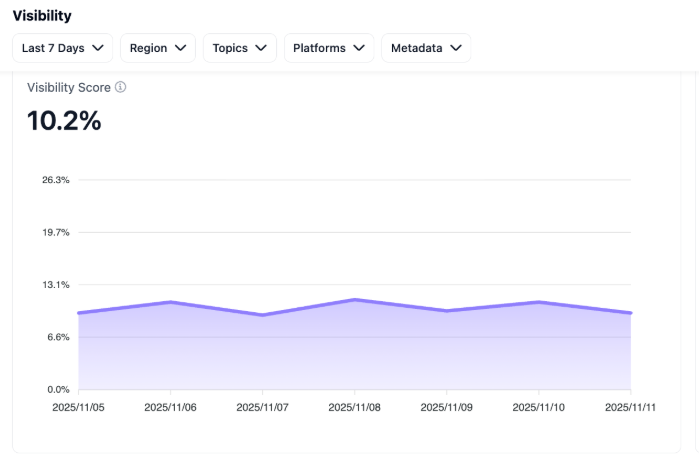

- Visibility: How often do you get mentioned at all (as a % of responses)?

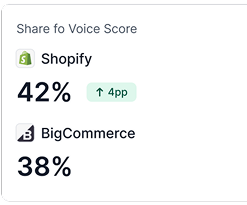

- Position: Where do you show up (top, middle, footnote)?

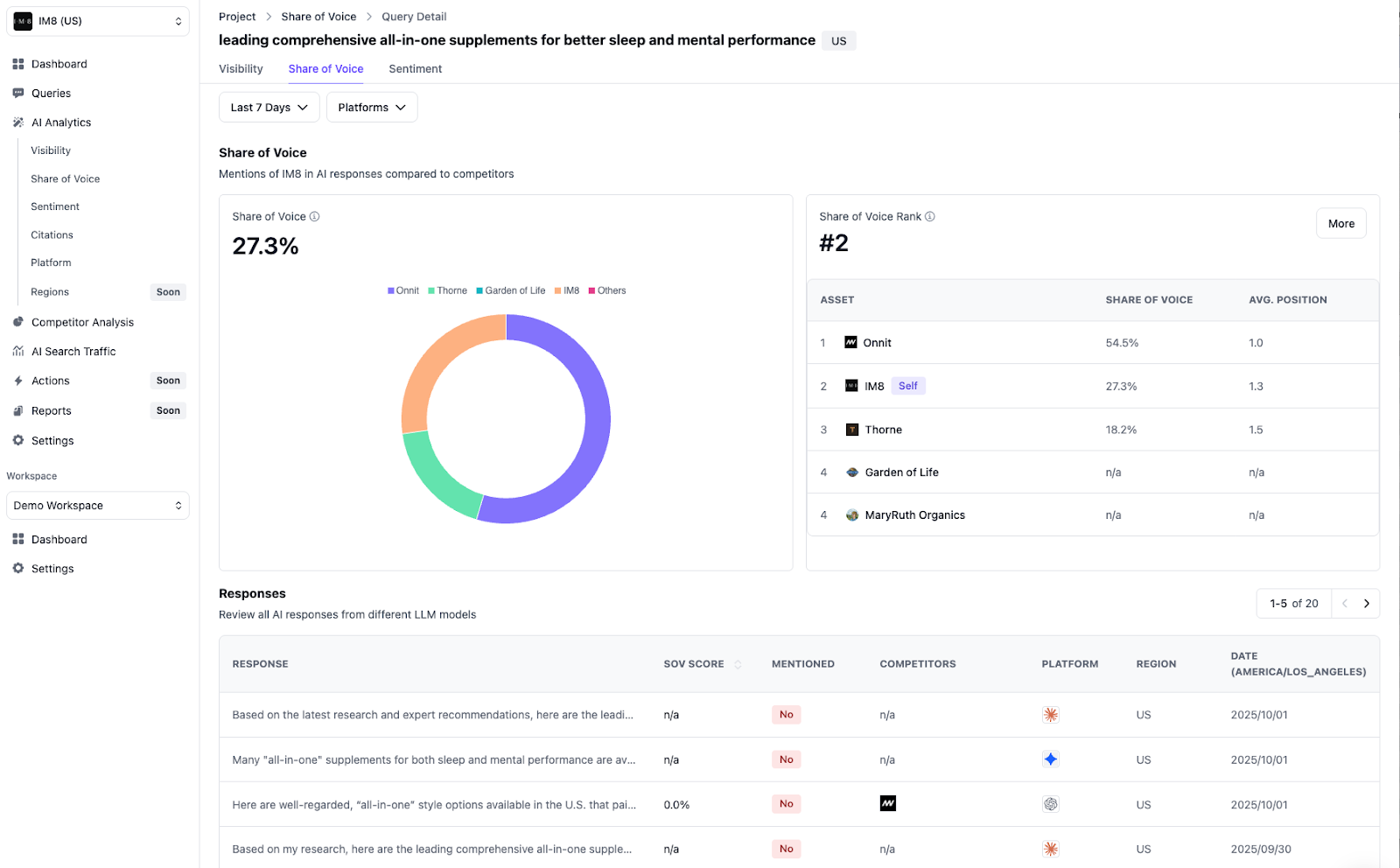

- Share of Voice: When brands are mentioned, how often are you chosen versus competitors?

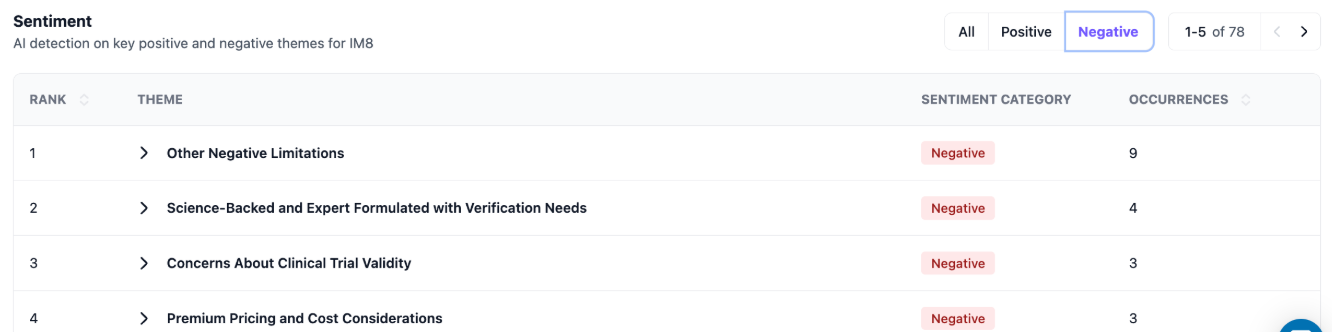

- Sentiment: How is your brand talked about (positive or negative, and why)?

- Framing: What does the AI say about you (strengths, weaknesses, “best for”)?

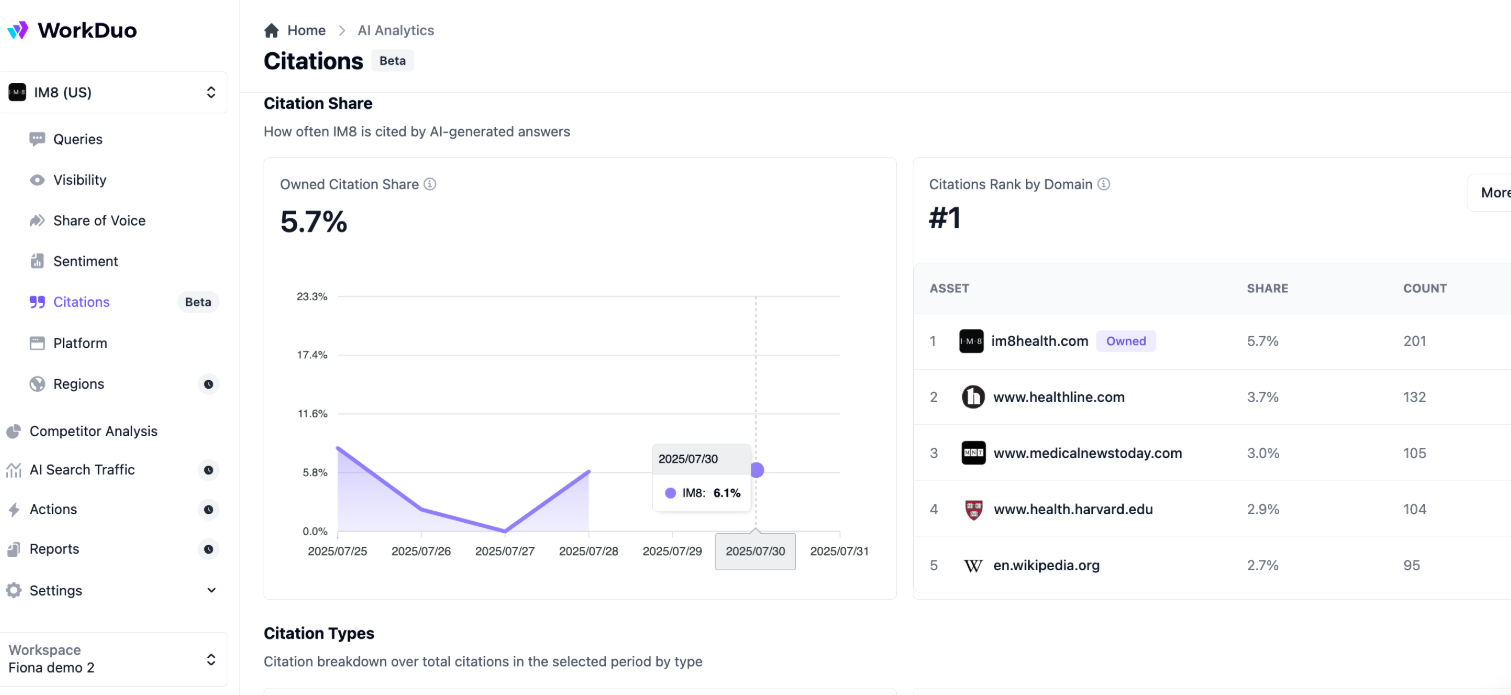

- Citation: Are you linked/cited as support?

- Competitor substitution: Who takes your spot when you’re absent?
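Three of these signals (mentions, visibility, and share of voice) reduce to simple counting once you have the response text. The sketch below assumes share of voice means your mentions divided by all tracked brand mentions, which is one common reading rather than a fixed definition. The brand names and responses are made up, and the naive substring matching would need refinement for real brand names.

```python
def visibility_metrics(responses, brand, competitors):
    """Compute mentions, visibility rate, and share of voice for `brand`."""
    tracked = [brand] + list(competitors)
    mentions = {name: 0 for name in tracked}
    responses_with_brand = 0

    for text in responses:
        lowered = text.lower()
        for name in tracked:
            # Naive substring count; assumes brand names are unambiguous.
            mentions[name] += lowered.count(name.lower())
        if brand.lower() in lowered:
            responses_with_brand += 1

    total_mentions = sum(mentions.values())
    return {
        "mentions": mentions[brand],
        "visibility": responses_with_brand / len(responses) if responses else 0.0,
        "share_of_voice": mentions[brand] / total_mentions if total_mentions else 0.0,
    }


# Hypothetical AI responses and brand names, for illustration only.
responses = [
    "For small teams, Acme and Globex are both solid picks.",
    "Globex is the most popular option; Initech is a budget alternative.",
    "Acme stands out for reporting. Acme also integrates with Zendesk.",
    "There are many tools in this category.",
]

metrics = visibility_metrics(responses, "Acme", ["Globex", "Initech"])
print(metrics)
```

Here the brand is mentioned three times but appears in only two of the four responses, which is why mentions and visibility are worth tracking separately.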

4) Separate Visibility From Value

Don’t treat mentions like wins. Split reporting into:

- Visibility outcomes: Presence, recommendation, citations, framing.

- Business outcomes: Clicks (when available), branded searches, demos, trials, leads, sales.

5) Diagnose Why the AI Answered That Way

Look for repeatable drivers:

- Missing/unclear facts (pricing, specs, availability, integrations)

- Unclear positioning (who it’s for, what it replaces)

- Weak third-party proof (reviews, comparisons, authority sources)

- Hard-to-extract pages (messy structure, vague claims)

- Competitors with clearer “best for” statements and comparison coverage

6) Turn Findings Into a Priority List

You want a simple, repeatable way to decide what to fix first:

- High-intent + low presence = urgent discoverability gap

- High presence + negative framing = messaging/proof gap

- Good presence + no citations = authority gap

- Cited but not recommended = positioning/comparison gap

- Present only in low-intent prompts = coverage gap (wrong intent mix)
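These rules can be written down as a small lookup so that every intent is triaged the same way. The thresholds for “low” and “good” presence below are assumptions; the rules above do not define them, so pick values that fit your own data.

```python
LOW_PRESENCE = 0.2   # assumed cutoff for "low presence"
GOOD_PRESENCE = 0.5  # assumed cutoff for "good" or "high" presence


def diagnose(high_intent, visibility, negative_framing, cited, recommended):
    """Return the gaps that apply to one intent, following the rules above."""
    gaps = []
    if high_intent and visibility < LOW_PRESENCE:
        gaps.append("discoverability gap")
    if visibility >= GOOD_PRESENCE and negative_framing:
        gaps.append("messaging/proof gap")
    if visibility >= GOOD_PRESENCE and not cited:
        gaps.append("authority gap")
    if cited and not recommended:
        gaps.append("positioning/comparison gap")
    return gaps


def coverage_gap(rows):
    """rows: (high_intent, visibility) pairs, one per tracked intent.

    True when the brand shows up only in low-intent prompts.
    """
    present_high = any(high and vis >= LOW_PRESENCE for high, vis in rows)
    present_low = any(not high and vis >= LOW_PRESENCE for high, vis in rows)
    return present_low and not present_high


print(diagnose(True, 0.05, False, False, False))
print(diagnose(True, 0.7, True, True, False))
print(coverage_gap([(True, 0.0), (False, 0.6)]))
```

Returning a list rather than a single label reflects that one intent can have more than one gap at a time.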

7) Track Improvements Like Experiments

Change one thing at a time, such as pricing clarity, comparison content, proof, or structure, then re-run the same intent set weekly or biweekly.

This makes it easier to interpret which changes influenced mentions, citations, or positioning. If you change too many things at once, the results become harder to read with confidence.
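A re-run comparison can be as simple as the sketch below, which assumes each run is stored as a mapping from prompt to whether the brand appeared. The function names and sample data are hypothetical.

```python
def visibility_rate(run):
    """Share of prompts in which the brand appeared. `run` maps prompt -> bool."""
    if not run:
        raise ValueError("Run contains no prompts")
    return sum(run.values()) / len(run)


def compare_runs(before, after):
    """Compare two runs of the same prompt set."""
    if before.keys() != after.keys():
        raise ValueError("Runs must use the same prompt set to be comparable")
    return {
        "delta": visibility_rate(after) - visibility_rate(before),
        "gained": sorted(p for p in before if after[p] and not before[p]),
        "lost": sorted(p for p in before if before[p] and not after[p]),
    }


# Hypothetical results before and after a single change (e.g. clearer pricing).
before = {"prompt 1": True, "prompt 2": False, "prompt 3": False, "prompt 4": False}
after = {"prompt 1": True, "prompt 2": True, "prompt 3": True, "prompt 4": False}

print(compare_runs(before, after))
```

Refusing to compare runs with different prompt sets is deliberate: it keeps a change in the test setup from being read as a change in visibility.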

8) Interpret What the Data Is Actually Telling You

Weak interpretation:

- “We have 10% AI share of voice.”

Better interpretation (actionable + diagnostic):

- “We’re missing from high-intent buying prompts in the UK, and when we appear, the AI flags pricing as unclear.”

Priority fixes: Add pricing clarity, a comparison page, and third-party reviews; then re-measure recommendation and citation rates.

How Teams Should Think About Demand in AI Search

In AI search, “demand” is less about a single volume number and more about presence and coverage across the intents your audience asks about.

Teams should move from keyword lists to intent maps, then align workflows so content, SEO, PR, and product marketing all support the same goal: being consistently included in AI answers.

- Define your priority intents (that drive revenue) and the key variations (persona, constraints, format, tone).

- Measure coverage with visibility rate, citation rate, share of voice, positions in AI answers, and sentiment framing, plus downstream conversions when trackable.

- Operationalize it with a repeatable cadence: A tracked prompt set, competitor benchmarks, and clear owners for fixes.

Demand still exists. The strategy shifts from “rank for a phrase” to “be the trusted option across an intent.”

Track What Actually Matters in AI Search With WorkDuo

WorkDuo helps you track AI search the way AI actually works. Monitor visibility and share of voice across priority intents, see where you are mentioned or cited, and compare performance against competitors.

Then turn patterns in framing, sentiment, and missing proof into a clear list of fixes you can re-test over time.

Start measuring your AI visibility with WorkDuo and turn GEO insights into repeatable action.
